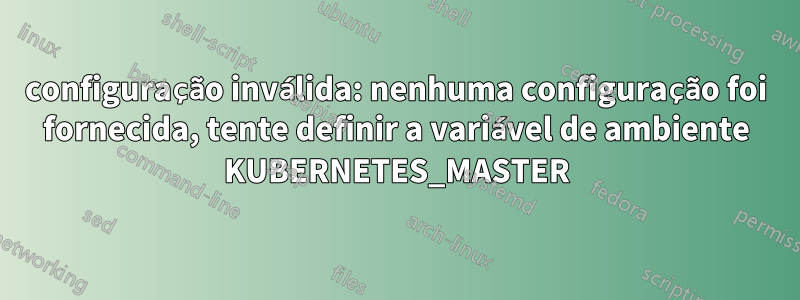

Error: Kubernetes cluster unreachable: invalid configuration: no configuration has been provided, try setting KUBERNETES_MASTER environment variable

Error: Get "http://localhost/api/v1/namespaces/devcluster-ns": dial tcp [::1]:80: connectex: No connection could be made because the target machine actively refused it.

Error: Get "http://localhost/api/v1/namespaces/devcluster-ns/secrets/devcluster-storage-secret": dial tcp [::1]:80: connectex: No connection could be made because the target machine actively refused it.

I set up an Azure AKS cluster using Terraform. After the initial setup, I made some changes to the code. Changes to other parts applied normally, but when I increase or decrease the node count in `default_node_pool`, I get the errors shown above.

In particular, the Kubernetes provider problem seems to occur when increasing or decreasing `os_disk_size_gb` or `node_count` in `default_node_pool`.

    resource "azurerm_kubernetes_cluster" "aks" {
      name                                = "${var.cluster_name}-aks"
      location                            = var.location
      resource_group_name                 = data.azurerm_resource_group.aks-rg.name
      node_resource_group                 = "${var.rgname}-aksnode"
      dns_prefix                          = "${var.cluster_name}-aks"
      kubernetes_version                  = var.aks_version
      private_cluster_enabled             = var.private_cluster_enabled
      private_cluster_public_fqdn_enabled = var.private_cluster_public_fqdn_enabled
      private_dns_zone_id                 = var.private_dns_zone_id

      default_node_pool {
        name                         = "syspool01"
        vm_size                      = var.agents_size
        os_disk_size_gb              = var.os_disk_size_gb # changing this triggers the error
        node_count                   = var.agents_count    # changing this triggers the error
        vnet_subnet_id               = data.azurerm_subnet.subnet.id
        zones                        = [1, 2, 3]
        kubelet_disk_type            = "OS"
        os_sku                       = "Ubuntu"
        os_disk_type                 = "Managed"
        ultra_ssd_enabled            = false
        max_pods                     = var.max_pods
        only_critical_addons_enabled = true
        # enable_auto_scaling = true
        # max_count           = var.max_count
        # min_count           = var.min_count
      }

      # (rest of the resource not shown)
    }
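To make the trigger concrete, the kind of change that precedes the errors can be sketched as a variable update. This is only an illustration: the file name `terraform.tfvars` and all of the values are assumptions, and only the variable names `agents_count` and `os_disk_size_gb` come from the configuration above.

```hcl
# terraform.tfvars (hypothetical file name and values, for illustration only)

# Before: values used for the first, successful apply.
# agents_count    = 3
# os_disk_size_gb = 128

# After: changing either value is the kind of edit that precedes the errors.
agents_count    = 4
os_disk_size_gb = 256
```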

I declared the kubernetes and helm providers in provider.tf as shown below, and the first deployment completed without problems.

    # Reads the cluster credentials back from Azure once the cluster exists.
    data "azurerm_kubernetes_cluster" "credentials" {
      name                = azurerm_kubernetes_cluster.aks.name
      resource_group_name = data.azurerm_resource_group.aks-rg.name
    }

    provider "kubernetes" {
      host                   = data.azurerm_kubernetes_cluster.credentials.kube_config[0].host
      username               = data.azurerm_kubernetes_cluster.credentials.kube_config[0].username
      password               = data.azurerm_kubernetes_cluster.credentials.kube_config[0].password
      client_certificate     = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_certificate)
      client_key             = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_key)
      cluster_ca_certificate = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].cluster_ca_certificate)
    }

    provider "helm" {
      kubernetes {
        host = data.azurerm_kubernetes_cluster.credentials.kube_config[0].host
        # username = data.azurerm_kubernetes_cluster.credentials.kube_config[0].username
        # password = data.azurerm_kubernetes_cluster.credentials.kube_config[0].password
        client_certificate     = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_certificate)
        client_key             = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_key)
        cluster_ca_certificate = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].cluster_ca_certificate)
      }
    }

Expected behavior: `terraform refresh`, `terraform plan`, and `terraform apply` should all work normally.

An additional finding: if I add `config_path = "~/.kube/config"` to the provider in provider.tf and deploy, it seems to work fine. But what I want is for it to work without specifying `~/.kube/config`.

    provider "kubernetes" {
      config_path            = "~/.kube/config" # <=== add this
      host                   = data.azurerm_kubernetes_cluster.credentials.kube_config[0].host
      username               = data.azurerm_kubernetes_cluster.credentials.kube_config[0].username
      password               = data.azurerm_kubernetes_cluster.credentials.kube_config[0].password
      client_certificate     = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_certificate)
      client_key             = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].client_key)
      cluster_ca_certificate = base64decode(data.azurerm_kubernetes_cluster.credentials.kube_config[0].cluster_ca_certificate)
    }

Is it, as I suspect, that `data.azurerm_kubernetes_cluster.credentials` cannot be read during `terraform plan`, `terraform refresh`, and `terraform apply`?
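For comparison, here is a minimal sketch of a variant that bypasses the data source and reads the credentials from the `azurerm_kubernetes_cluster.aks` resource directly. It assumes the resource exposes the same `kube_config` attributes as the data source. It is untested against this configuration, so it may or may not avoid the error.

```hcl
# Untested sketch: take the credentials from the cluster resource itself
# rather than from data.azurerm_kubernetes_cluster.credentials.
provider "kubernetes" {
  host                   = azurerm_kubernetes_cluster.aks.kube_config[0].host
  client_certificate     = base64decode(azurerm_kubernetes_cluster.aks.kube_config[0].client_certificate)
  client_key             = base64decode(azurerm_kubernetes_cluster.aks.kube_config[0].client_key)
  cluster_ca_certificate = base64decode(azurerm_kubernetes_cluster.aks.kube_config[0].cluster_ca_certificate)
}
```

If the data source is being deferred until apply time whenever the cluster resource has pending changes, this variant would at least show whether the provider still falls back to `localhost` without it.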